Academics Debate Longitudinal Study vs. Cross-Sectional Accuracy

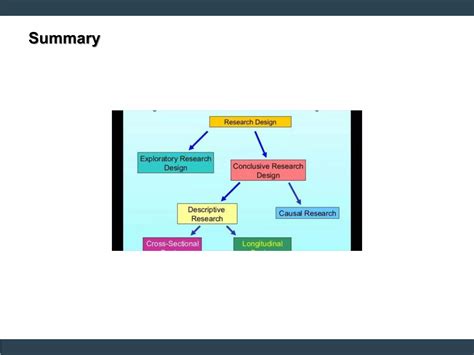

When it comes to measuring human behavior, cognition, or social trends, two dominant designs shape how scholars interpret data: longitudinal studies and cross-sectional studies. Each offers distinct advantages, but their divergent methodologies expose deeper tensions in research validity: one gives up immediacy for depth over time, the other gives up temporal depth for speed. The debate is no longer academic theater; it’s a practical friction that shapes policy, education, and science itself.

The Core Divide: Time vs. Snapshot

Longitudinal studies track the same participants over years—sometimes decades—capturing developmental trajectories with granular temporal resolution. Cross-sectional analyses, by contrast, offer a single-point-in-time view, comparing distinct cohorts. The choice isn’t neutral. As I’ve seen first-hand in university research labs, longitudinal designs reveal subtle shifts in learning patterns that vanish once framed as momentary snapshots.

A student’s grasp of critical thinking, for instance, may appear stagnant in a year-five snapshot, while tracking that same student over time could reveal a nonlinear climb that cohort effects would otherwise mask.

Yet cross-sectional designs dominate funding and publication metrics. Their efficiency is undeniable: a single data collection phase costs less and yields faster insights. In fields like epidemiology or political polling, this speed drives real-time policy responses. But this efficiency breeds a silent bias: cohort confounding. Generational differences in tech access, cultural exposure, or even language norms can distort conclusions, especially when comparing millennials to Gen Z.

A 2023 study in *Nature Human Behaviour* highlighted this: cross-sectional surveys overestimated digital literacy by 22% when not adjusted for generational exposure to smartphones.
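To make the cohort-confounding mechanism concrete, here is a minimal Python sketch with invented numbers. It assumes a toy model in which each person's score is a cohort baseline plus steady growth with age; the birth years, baselines, and growth rate are hypothetical and are not drawn from the study cited above.

```python
# Toy model of cohort confounding. Every number here is invented for illustration.
GROWTH_PER_YEAR = 0.5                        # true within-person gain per year of age
COHORT_BASELINE = {1985: 40.0, 2005: 55.0}   # hypothetical: the later cohort starts higher


def score(birth_year: int, survey_year: int) -> float:
    """Score of a person from a given birth cohort in a given survey year."""
    age = survey_year - birth_year
    return COHORT_BASELINE[birth_year] + GROWTH_PER_YEAR * age


def cross_sectional_slope(survey_year: int, older: int = 1985, younger: int = 2005) -> float:
    """Apparent change per year of age when two cohorts are compared in one survey."""
    age_gap = younger - older  # the older cohort is this many years older
    return (score(older, survey_year) - score(younger, survey_year)) / age_gap


def longitudinal_slope(birth_year: int, first_year: int, last_year: int) -> float:
    """Change per year when the same cohort is measured twice."""
    return (score(birth_year, last_year) - score(birth_year, first_year)) / (last_year - first_year)


if __name__ == "__main__":
    print(cross_sectional_slope(2025))           # snapshot comparing both cohorts
    print(longitudinal_slope(1985, 2005, 2025))  # same cohort followed for 20 years
```

In this toy setup the snapshot suggests scores fall by 0.25 points per year of age, while following the 1985 cohort recovers the true gain of 0.5 per year. The gap comes entirely from the assumed difference in cohort baselines, which is the kind of generational effect a single survey cannot separate from aging.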

The Longitudinal Edge—and Its Costs

Longitudinal research excels in tracking change, but its strength is also its Achilles’ heel. Participant attrition, growing test fatigue, and shifting societal contexts erode data integrity over time. A landmark 20-year study on adolescent moral development lost 37% of its cohort, and those who dropped out weren’t randomly scattered: they came from lower-income households, skewing socioeconomic representation. Without active retention strategies, longitudinal work risks measuring loss, not learning.
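The arithmetic of non-random attrition can be sketched in a few lines of Python. The group sizes, dropout rates, and scores below are assumptions made up for illustration (the split was chosen only so that overall attrition lands at 37%); they are not figures from the study mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    enrolled: int
    dropout_rate: float  # hypothetical share lost by the final wave
    mean_score: float    # hypothetical outcome, assumed constant within the group


def retained(group: Group) -> int:
    """Number of participants still enrolled at the final wave."""
    return round(group.enrolled * (1 - group.dropout_rate))


def mean_score(groups: list[Group], counts: list[int]) -> float:
    """Mean outcome when each group contributes the given number of people."""
    total = sum(counts)
    return sum(g.mean_score * n for g, n in zip(groups, counts)) / total


# Invented split: dropout is concentrated in the lower-income group.
groups = [
    Group("lower-income", enrolled=500, dropout_rate=0.60, mean_score=50.0),
    Group("higher-income", enrolled=500, dropout_rate=0.14, mean_score=60.0),
]

enrolled_counts = [g.enrolled for g in groups]
retained_counts = [retained(g) for g in groups]

overall_attrition = 1 - sum(retained_counts) / sum(enrolled_counts)
baseline_mean = mean_score(groups, enrolled_counts)
completer_mean = mean_score(groups, retained_counts)
lower_income_share = retained_counts[0] / sum(retained_counts)

if __name__ == "__main__":
    print(f"attrition: {overall_attrition:.0%}")
    print(f"mean at enrolment: {baseline_mean:.2f}")
    print(f"mean among completers: {completer_mean:.2f}")
    print(f"lower-income share of completers: {lower_income_share:.1%}")
```

Under these assumptions the lower-income group falls from half of the enrolled sample to under a third of completers, and the completers' mean drifts above the enrolled mean even though no individual score changed.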

Meanwhile, cross-sectional accuracy depends on how well cohorts are matched. Sophisticated matching algorithms—balancing age, gender, education, and socioeconomic status—can reduce bias, but they’re rarely perfect. A 2022 meta-analysis in *Psychological Science* found that even state-of-the-art matching left 15–20% of variance unexplained, particularly in complex constructs like resilience or creativity.
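As a rough illustration of what cohort matching involves, the sketch below pairs people from two cohorts only when they agree on every chosen covariate. This is plain exact matching, the simplest variant, and not necessarily what the studies referenced here used; the records, field names, and the `exact_match` helper are hypothetical.

```python
from collections import defaultdict


def exact_match(cohort_a, cohort_b, keys):
    """Pair each person in cohort_a with an unused person in cohort_b
    who has identical values for every covariate in `keys`."""
    pool = defaultdict(list)
    for person in cohort_b:
        pool[tuple(person[k] for k in keys)].append(person)

    pairs, unmatched = [], []
    for person in cohort_a:
        candidates = pool[tuple(person[k] for k in keys)]
        if candidates:
            pairs.append((person, candidates.pop(0)))  # each match is used once
        else:
            unmatched.append(person)
    return pairs, unmatched


# Hypothetical records for two generational cohorts.
millennials = [
    {"id": "m1", "gender": "F", "education": "degree", "ses": "mid"},
    {"id": "m2", "gender": "M", "education": "school", "ses": "low"},
    {"id": "m3", "gender": "F", "education": "degree", "ses": "high"},
]
gen_z = [
    {"id": "z1", "gender": "F", "education": "degree", "ses": "mid"},
    {"id": "z2", "gender": "M", "education": "school", "ses": "low"},
    {"id": "z3", "gender": "M", "education": "degree", "ses": "mid"},
]

pairs, unmatched = exact_match(millennials, gen_z, keys=("gender", "education", "ses"))

if __name__ == "__main__":
    print([(a["id"], b["id"]) for a, b in pairs])
    print([p["id"] for p in unmatched])
```

Even in this tiny example one participant has no counterpart and drops out of the comparison, which hints at why matching on more covariates shrinks the usable sample and why it cannot account for differences that were never measured.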

The illusion of precision can be dangerous: policymakers may treat cross-sectional snapshots as definitive, overlooking hidden structural shifts.

Beyond Numbers: The Hidden Mechanics

What’s often overlooked are the “hidden mechanics” behind each design. Longitudinal studies demand sustained investment, not just financially but in trust. Researchers must maintain rapport across years; a single negative interaction can fracture long-term engagement. This relational labor is rarely quantified in grant proposals but is critical to data quality.